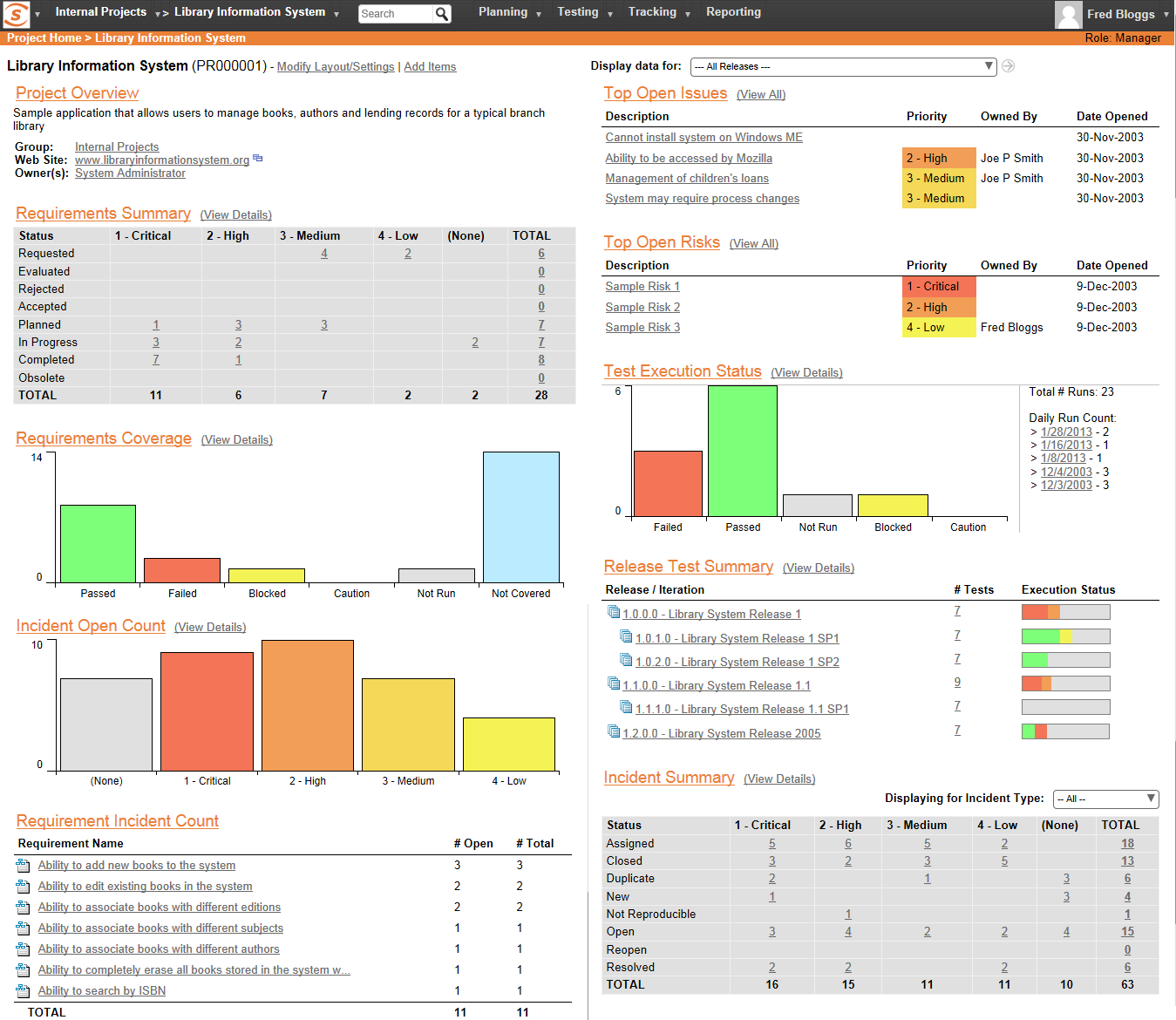

Use Cases and Deployment Scope

We use SpiraPlan (which includes the SpiraTest functionality) to manage requirements, test cases, incidents, and releases for our medical device products. We use it for all current products, both hardware and software. We migrated to SpiraPlan from Jazz, and at this point it lets us do everything Jazz allowed us to do.

Alternatives Considered

IBM Rational DOORS and IBM Rational DOORS Next Generation

Other Software Used

Visual Studio IDE, SOLIDWORKS, AutoCAD