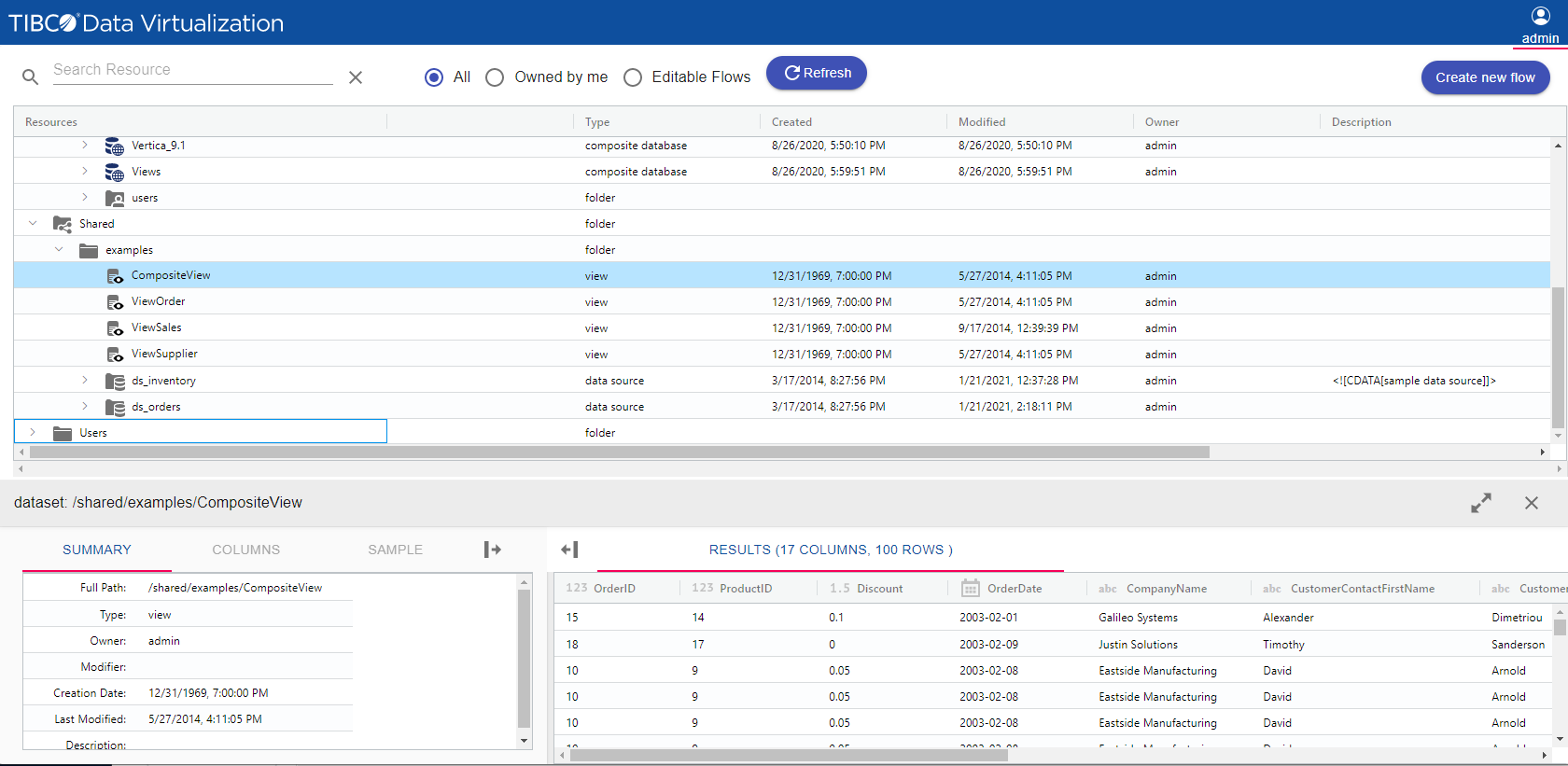

Use Cases and Deployment Scope

I use this app to generate reports on customer orders across different statuses, which helps me identify pending orders and analyse them. Croma is an electronics retailer, and we receive e-commerce orders from many websites, including Croma.com, Tata Cliq, Amazon, and Flipkart. TIBCO gives us the data we need to segregate these orders and better understand customer behaviour. We use this data in presentations to management to show where we stand and where there is scope for improvement.

Other Software Used

IBM Sterling Order Management, SAP Customer Data Solutions, IBM Store Engagement